Driving product clarity, reducing migration failure, and creating scalable documentation systems for Atlassian Cloud

MIGRATION SAFETY & DECISION GUIDANCE · JIRA CLOUD · ATLASSIAN

Scaling trust: building soft limits for Jira Cloud migrations

Context

Jira Cloud-to-Cloud (C2C) is an Atlassian cloud product that helps customers move product data from one Jira Cloud site to another.

This project focused on improving migration clarity, user confidence, and support readiness for Jira C2C as it moved from beta toward general availability (GA).

Role

I was the first content designer to lead the creation of soft limits for Atlassian’s cloud offerings. My responsibilities included:

Leading strategy, design, and rollout of the guardrails framework

Coordinating across product, engineering, legal, and support

Synthesizing insights from research and technical sources

Authoring internal and external documentation

Team

Product Design, Product Management, Engineering, Legal, Customer Support

Timeline

Multiple phases, from beta stabilization to GA readiness

Audience

Jira C2C admins assessing migration options

Support teams handling queries

Customer-facing teams guiding customers on product selection

Problem Statement

Jira C2C was being positioned as the recommended migration tool while still in active development, with limited and evolving capabilities.

This led to:

Gap between feature capability and user expectation

Users choosing it for both small and large migrations without understanding limitations

Migration failures without clear recovery paths

Data inconsistencies for some enterprise users

Support teams lacking structured guidance

The core challenge was twofold:

How might we clarify product capability immediately while building a scalable system to manage migration risk long term?

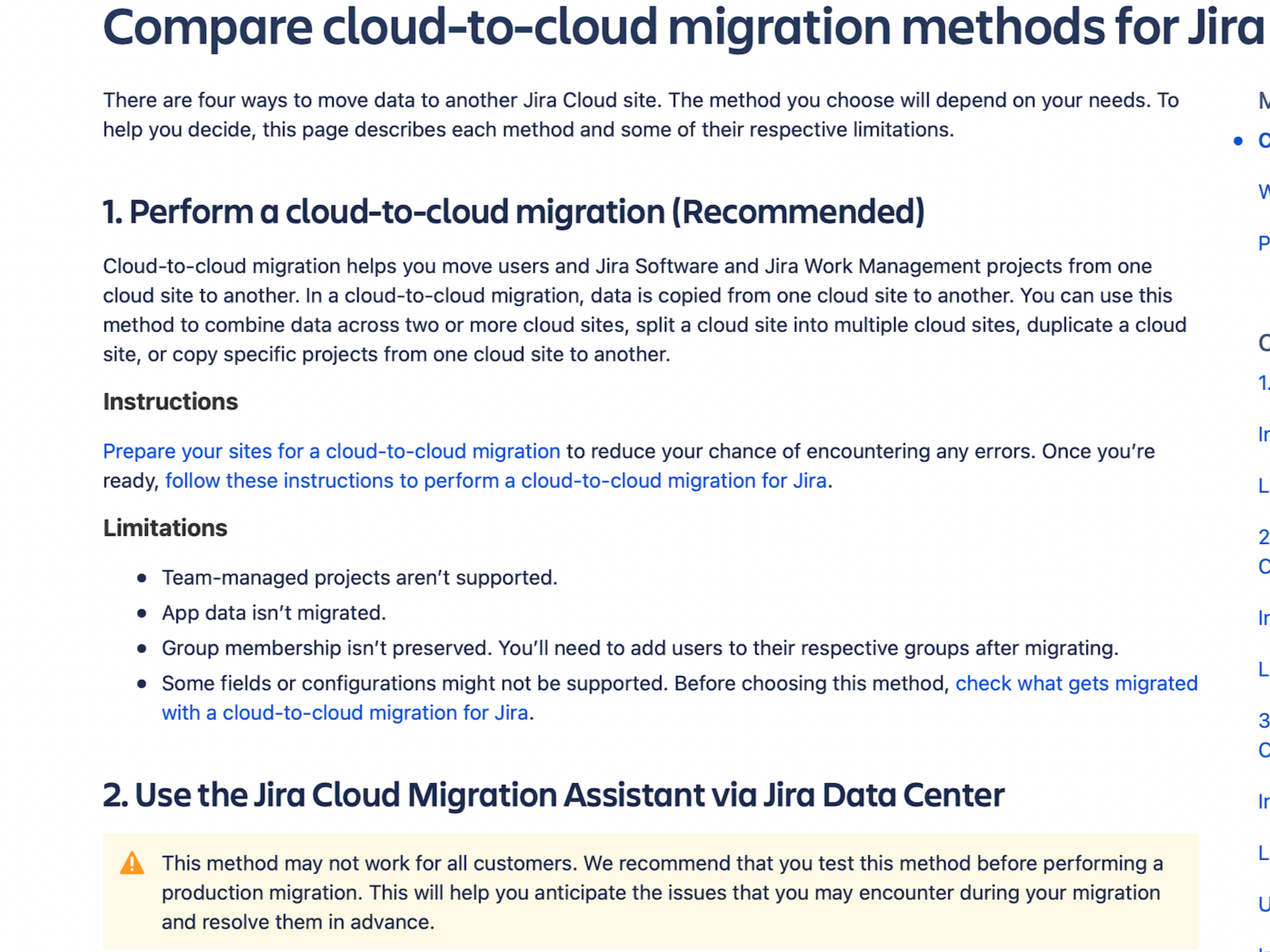

Documentation positioning Jira C2C as the recommended tool

Goal

Help customers use Jira C2C more confidently by:

clearly explaining what the feature can and cannot do

reducing migration failures through better pre-migration guidance

equipping support teams with unified and clear messaging

building a framework that could be reused for other Atlassian Cloud products

aligning with Atlassian’s north star of cloud growth through successful enterprise migrations

Approach

Each user issue needed to be tackled individually, in close collaboration with design, engineering, and support.

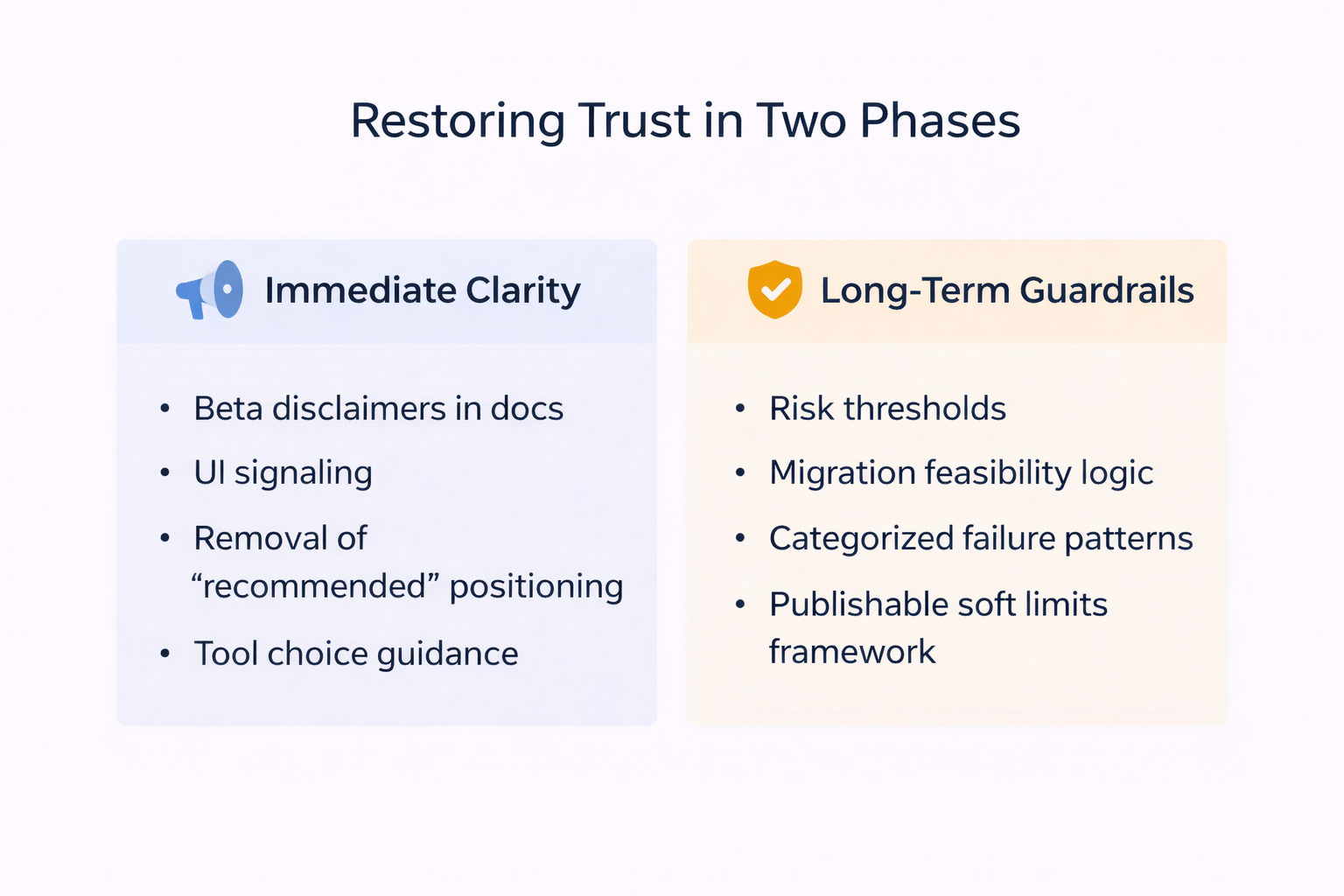

From the beginning, I structured the work into two parallel tracks.

Track 1: Immediate Clarity

Correct positioning, communicate beta scope clearly, and guide users toward the right migration method based on their data size and type.

Track 2: Long-Term Guardrails

Design a scalable guardrails framework that would proactively guide migration feasibility and reduce failure risk before users initiated a migration.

This ensured we addressed both immediate confusion and structural reliability.

What I Delivered

User-facing documentation explaining Jira C2C capabilities and limitations

A shared structure for defining and documenting migration risks

Internal support enablement documentation and FAQs

A living Confluence knowledge base used by product, engineering, and support

Updated UI and documentation with clearer beta messaging

Solution overview

I split the content strategy into three core aspects:

Clarity in choice: Enabled users to choose the right tool based on their data type and size. We focused on more confident, informed decision-making.

Honest product positioning: Set realistic expectations before users started migrations, which reduced frustration from discovering issues too late in the flow.

Scalable frameworks and support enablement: Aligned support, engineering, and design around one unified strategy.

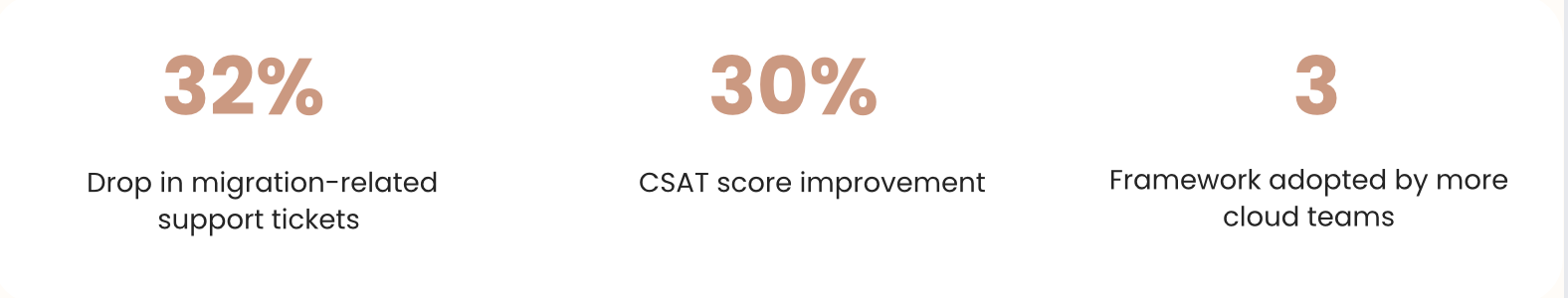

Impact

Even before formal UX metrics were available:

Support teams used the documentation directly in ticket replies

Soft limits became a go/no-go checkpoint for enterprise migrations

Users had clearer guidance before attempting large data moves

The tracker template was adopted by other teams building guardrails

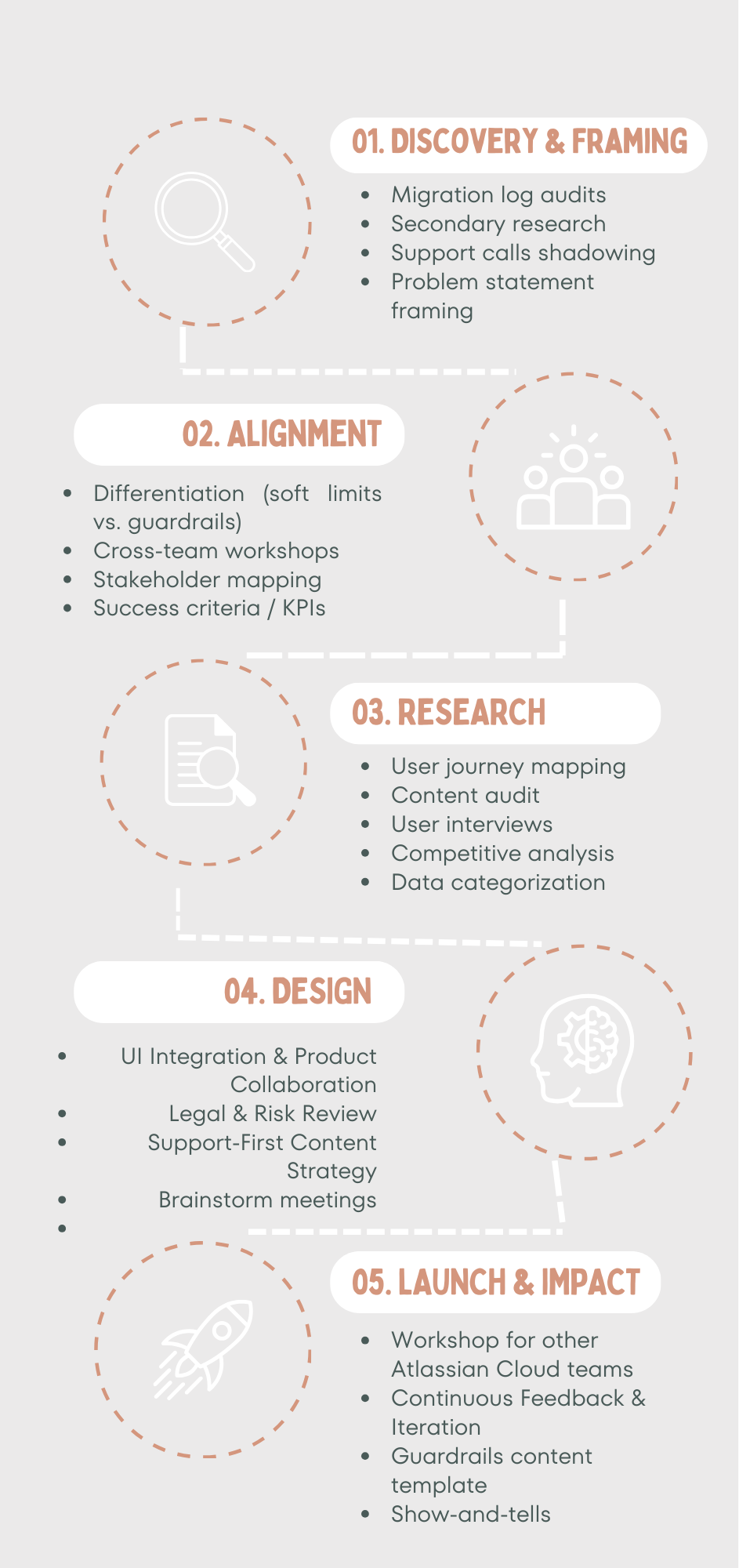

Design process

The process was non-linear: we had to revisit and refine our approach as new insights emerged. This section details how the research system was built, how patterns emerged, and how the soft limits model took shape.

Track 1: Immediate positioning correction

Documentation Audit

I audited all Jira C2C documentation and identified where:

Beta status was not visible

Capability limitations were buried or absent

The feature was positioned as “recommended” without qualification

I reviewed Atlassian content design guidelines and analyzed how other cloud products signaled beta maturity.

Interventions

We aligned on three changes:

Add a visible beta note at the top of key documentation articles

Introduce a dismissible beta capability banner in the UI

Add a beta tag next to the UI navigation label

We also removed positioning language implying GA maturity and added guidance on choosing the right migration method based on data size and type.

All updates went through multiple cross-functional reviews before publishing. This ensured users encountered capability context before initiating migration.

A version of the messaging we created for beta awareness. (This screenshot is from Confluence EAP, but the content and strategy were taken from the Jira C2C messaging we had created.)

Track 2: Designing the guardrails framework

Research & inputs

(How I built understanding)

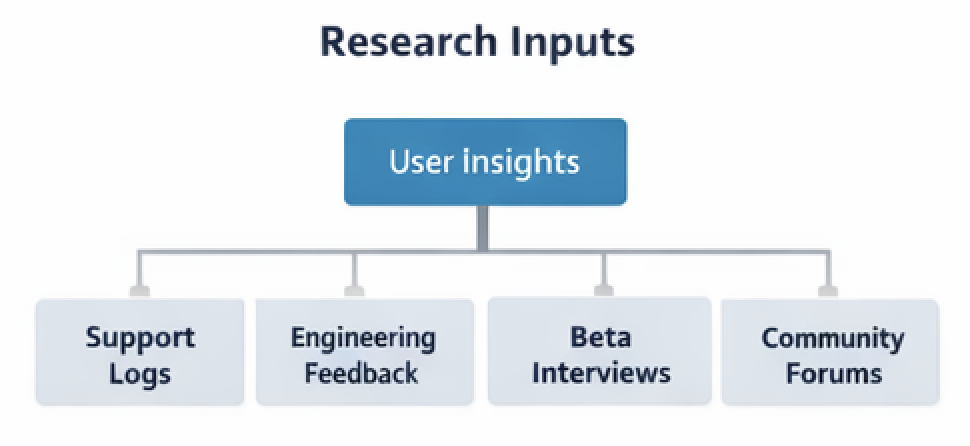

Because Jira C2C was in beta, migration failures were visible only in real customer environments. Information about issues was scattered across support, engineering, PMs, and community channels. I focused on making sense of the signals already present across the system that reflected real operational issues.

Step 1

I began by partnering with the lead content designer from the Data Center team, who had already worked on guardrails for their products. Their experience gave me valuable context on:

what success looked like

how we could adapt it for the Jira C2C use case

how they approached this for a legacy product

I used the Data Center guardrails as a baseline for Jira C2C to maintain consistency across Atlassian communication.

Step 2

I then:

Set up weekly syncs with engineering, PM, and product design to gather user issue data they had collected over time

Reviewed and synthesized existing user interviews and research to identify recurring pain points and user language patterns

Broke down migration logs to make sense of the kind of data that was failing

Held deep conversations with support to understand failure patterns and the workarounds they gave to users

I synthesized these inputs to identify recurring migration risk patterns.

Key insights

Each source answered a different part of the problem:

Migrations often failed without clear reasons or recovery steps

Interviews showed that users hesitated because migration status reporting was missing, so they couldn’t tell what had succeeded or failed

Support data showed where users were struggling and how issues were being explained

Engineering logs and UI feedback were not actionable for users

Together, these inputs gave us a system-level view of both technical behavior and user perception.

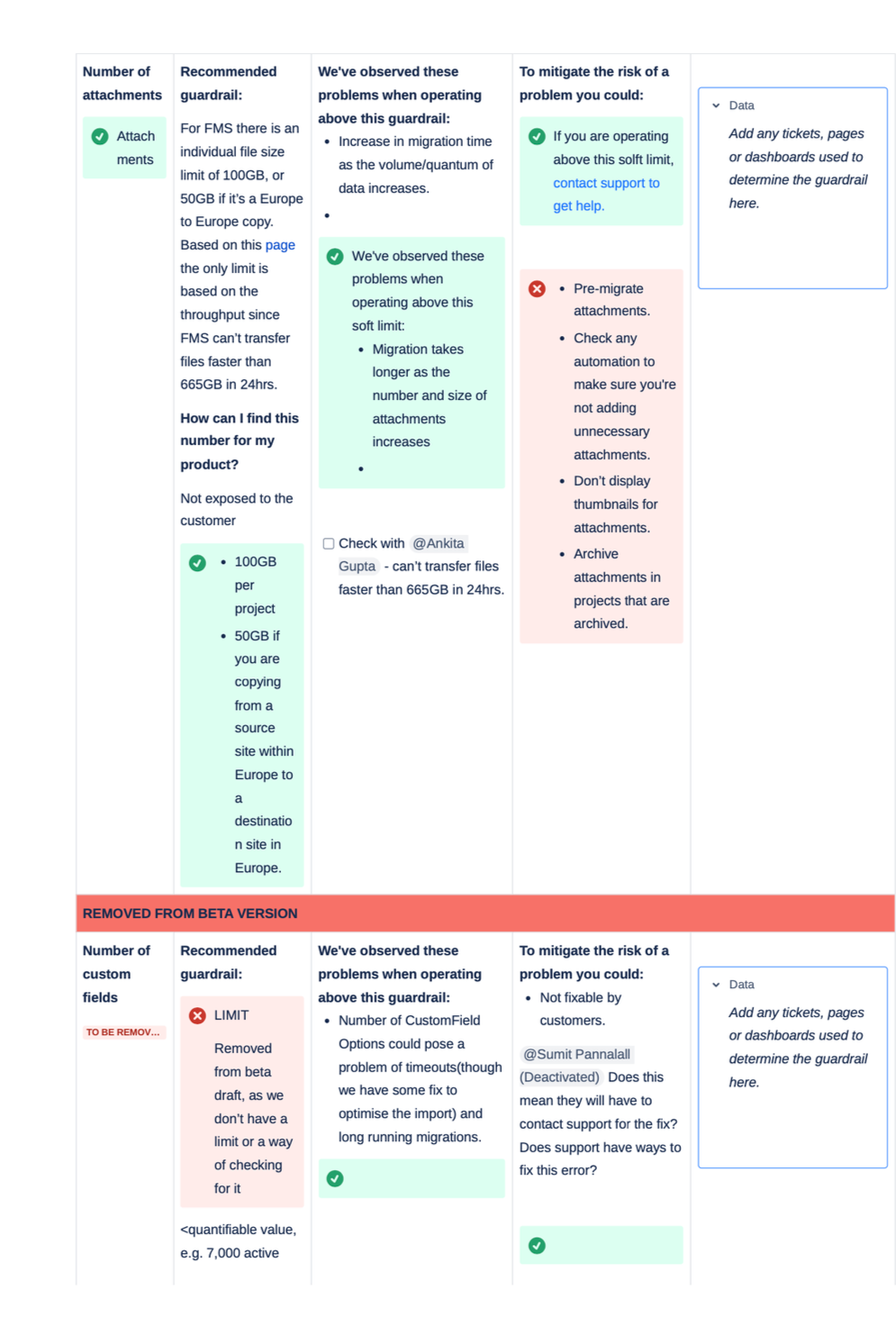

Data categorization & prioritization

To bring structure to scattered migration issues, I created a shared Confluence tracker that became the central working artifact for this project.

Structured guardrail tracker capturing risk, mitigation, and evidence

Engineering and support used this space to log:

recurring failure patterns

related Jira tickets

identified guardrails or thresholds

potential risks when user data exceeded limits

available workarounds

This gave us one visible, structured source of truth instead of fragmented signals across Slack, tickets, and support logs.

As patterns emerged, I categorized each data point based on:

affected component, such as projects, fields, comments, or attachments

frequency and urgency

risk level: systemic, high risk, edge case, or low risk

availability of reliable mitigation

The tracker became the foundation for prioritizing soft limits and aligning teams around migration risk.
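The categorization above can be pictured as a small data model. The following is a minimal sketch, not the actual tracker (which lived in Confluence): the field names, the 1 to 5 scales for frequency and urgency, the ordering of the risk levels, and the ticket keys are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import List, Optional


class RiskLevel(IntEnum):
    # Assumed ordering: a higher value means a more serious risk.
    LOW_RISK = 1
    EDGE_CASE = 2
    HIGH_RISK = 3
    SYSTEMIC = 4


@dataclass
class TrackerEntry:
    """One logged data point in a hypothetical guardrail tracker."""
    component: str                     # e.g. "projects", "fields", "comments", "attachments"
    failure_pattern: str               # short description of the recurring failure
    risk_level: RiskLevel
    frequency: int                     # assumed scale: 1 (rare) to 5 (very common)
    urgency: int                       # assumed scale: 1 (low) to 5 (critical)
    related_tickets: List[str] = field(default_factory=list)
    workaround: Optional[str] = None   # None when no reliable mitigation exists

    @property
    def has_mitigation(self) -> bool:
        return self.workaround is not None


def prioritize(entries: List[TrackerEntry]) -> List[TrackerEntry]:
    """Sort entries so the most serious, most frequent, most urgent come first."""
    return sorted(
        entries,
        key=lambda e: (e.risk_level, e.frequency, e.urgency),
        reverse=True,
    )


if __name__ == "__main__":
    # Illustrative entries only; not real tracker data.
    entries = [
        TrackerEntry("comments", "some comments missing after migration",
                     RiskLevel.EDGE_CASE, frequency=2, urgency=2),
        TrackerEntry("attachments", "large attachment sets fail to transfer",
                     RiskLevel.SYSTEMIC, frequency=4, urgency=5,
                     related_tickets=["EXAMPLE-1"],
                     workaround="migrate in smaller batches"),
    ]
    for entry in prioritize(entries):
        print(entry.component, entry.risk_level.name, entry.has_mitigation)
```

Sorting on risk level first, then frequency and urgency, is one plausible way to turn the four categories into a ranked list; the source does not state how the dimensions were weighted against each other.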

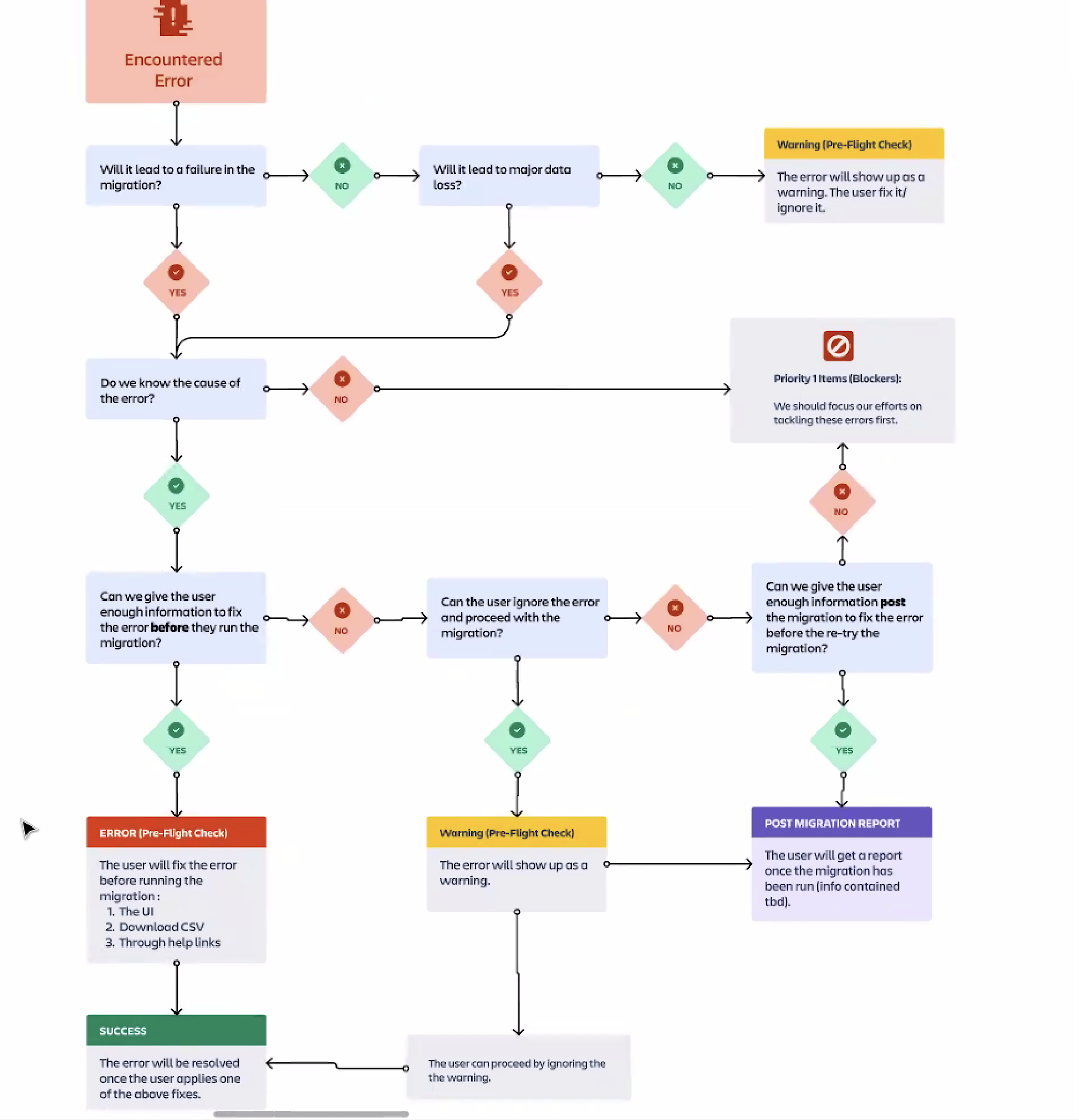

Identifying where users needed guardrails

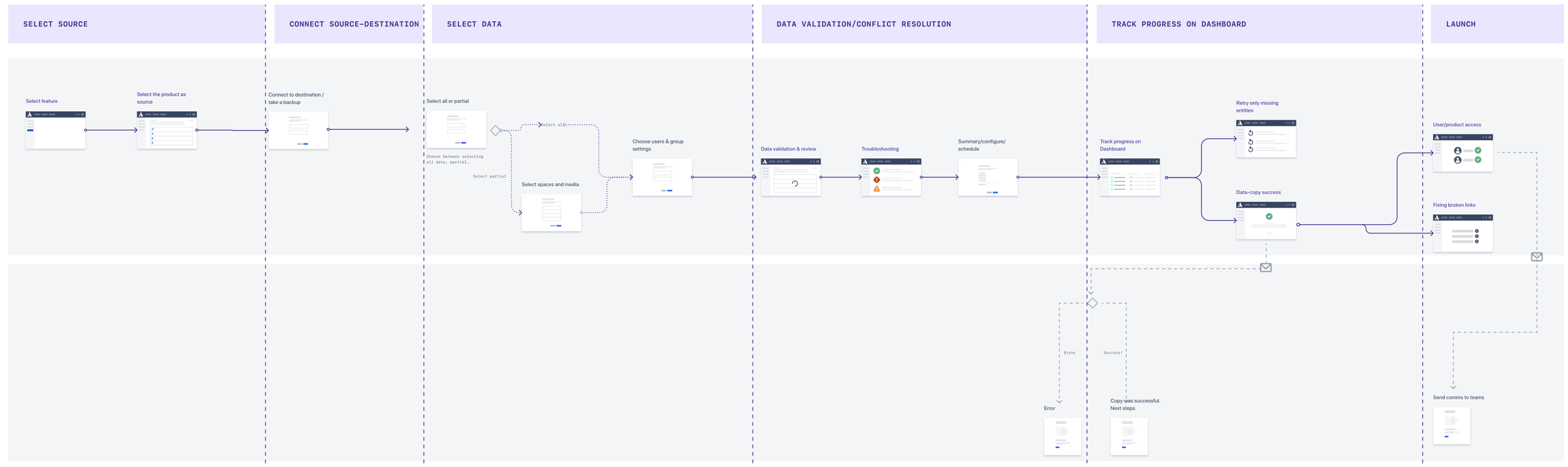

With priorities in place, I worked with the product designer and engineers to map the end-to-end migration journey.

We focused on:

what kind of errors users might encounter and where in the journey

where users were most likely to fail

where failures were invisible or hard to recover from

and how these errors should be categorized

This mapping revealed a consistent pattern: most serious breakdowns were happening before users even started migrating, during planning and decision making.

Users were making high-risk choices without knowing:

whether their data size was supported

which components were likely to fail

or what “success” looked like in a beta product

Aligning with the Journey

We were clear that aligning with the user journey would enable:

customers to make informed decisions about Jira C2C before migrating

support teams to provide consistent, aligned responses to data-related issues

engineering to track known problem areas and log coverage

designers and PMs to reference data limits when planning roadmap or UI updates

This allowed us to ship a meaningful first version while leaving room for iteration.

To operationalize this, we built a decision framework to classify migration errors.

Challenges in alignment

Initially, we faced some pushback from PMs and engineering, who had competing development priorities. To counter this, we presented:

migration failure reports from support

documentation feedback from users

screenshots from the Atlassian Community

internal business goals around cloud adoption and enterprise migration success

This helped shift the guardrails effort from “nice-to-have” to a strategic priority for the next quarter.

Identifying the limits of existing guardrails

(Conceptual tension)

As we started shaping the documentation, I realized that our positioning did not fully align with the existing guardrails used across Atlassian.

The Data Center guardrails model focused on long-term system health and stability in on-prem environments. Our problem space was cloud migrations, where users were trying to move large volumes of data through a system that was still evolving.

This mismatch made it difficult to reuse the same mental model and language.

I revisited the original guardrails pitch and discussed this gap with content design, support, product design, and engineering.

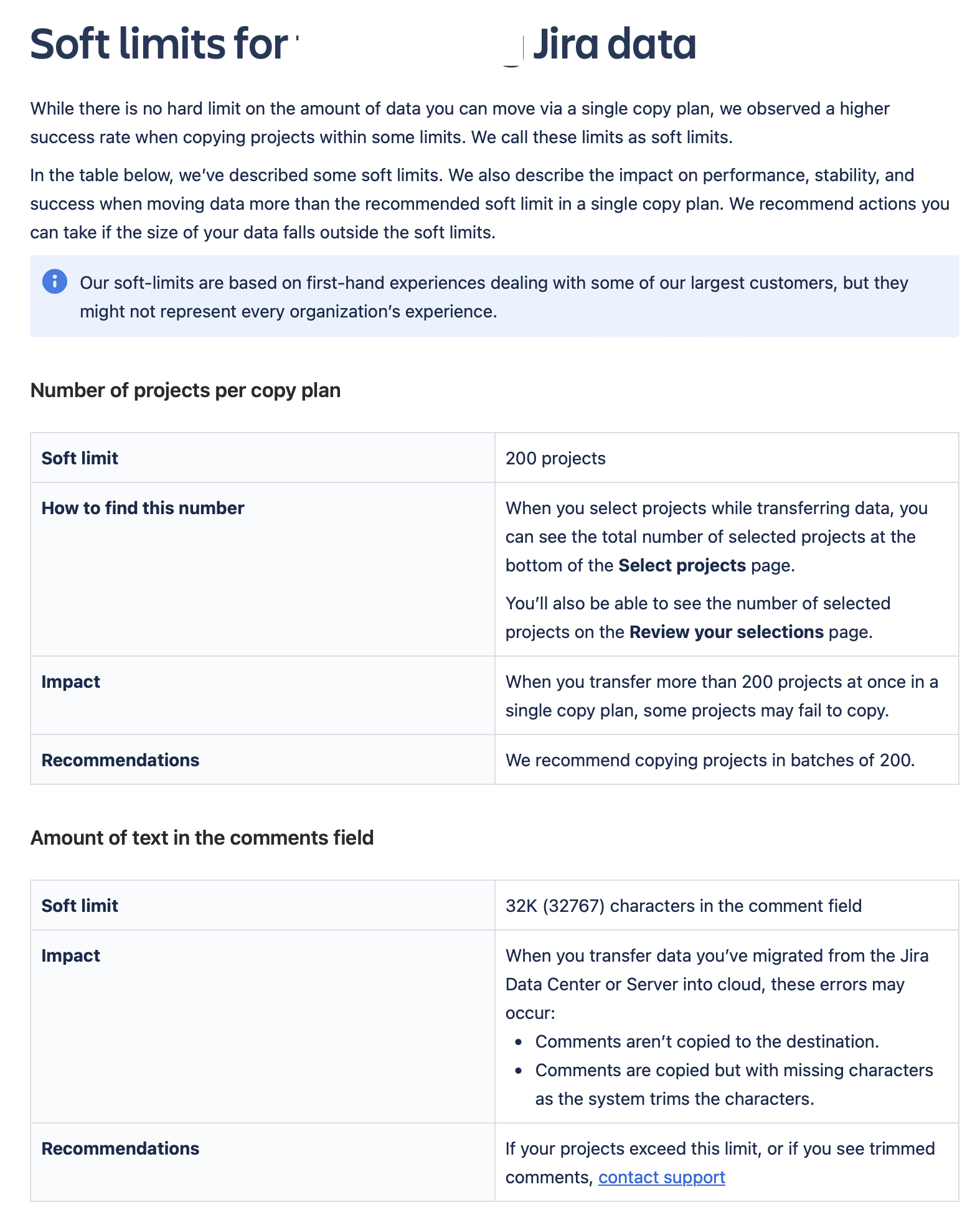

Guardrails vs. soft limits: Laying down the distinction

Based on these discussions, we formally separated the two concepts:

Guardrails address long-term system health and maintenance in on-prem environments. The desired outcome is system-wide stability.

Soft limits focus on enabling successful and efficient data movement in cloud migrations. The desired outcome is increased migration effectiveness.

This distinction shaped:

the terminology used across support macros and documentation

internal alignment across engineering, PMs, and support

Following extensive discussion, we finalized the term soft limits to reflect advisory, in-beta guidance rather than hard technical constraints.

Shaping the soft limits

With the conceptual model in place, the next challenge was defining which limits were feasible to publish and how they should be structured.

We shortlisted what made it into version 1 based on:

migration components most likely to fail

features not fully supported or prone to inconsistency

common failure themes with known workarounds

areas where users had no visibility and support had no clear answers

Some potential limits were put on hold due to:

limited user data

no existing fix or workaround

legal sensitivity

Filtering data points for publishing

Data prioritization flowchart
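The shortlisting logic described above can be sketched as a simple triage function. This is an illustration under stated assumptions: the source does not say how the criteria were combined, so the sketch assumes that any hold reason takes precedence and that meeting any one inclusion criterion is enough to shortlist. All names are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class CandidateLimit:
    """A potential soft limit under review (hypothetical structure)."""
    name: str
    # Inclusion criteria
    likely_to_fail: bool          # component is among those most likely to fail
    partially_supported: bool     # feature not fully supported or prone to inconsistency
    has_known_workaround: bool    # common failure theme with a known workaround
    support_lacks_answer: bool    # users have no visibility, support has no clear answer
    # Hold criteria
    enough_user_data: bool
    legally_sensitive: bool


def hold_reasons(candidate: CandidateLimit) -> List[str]:
    """Return the reasons, if any, to keep a candidate out of version 1."""
    reasons = []
    if not candidate.enough_user_data:
        reasons.append("limited user data")
    if not candidate.has_known_workaround:
        reasons.append("no existing fix or workaround")
    if candidate.legally_sensitive:
        reasons.append("legal sensitivity")
    return reasons


def triage(candidate: CandidateLimit) -> str:
    """Classify a candidate as 'hold', 'publish', or 'not shortlisted'."""
    # Assumption: any hold reason outweighs the inclusion criteria.
    if hold_reasons(candidate):
        return "hold"
    meets_any_criterion = any([
        candidate.likely_to_fail,
        candidate.partially_supported,
        candidate.has_known_workaround,
        candidate.support_lacks_answer,
    ])
    return "publish" if meets_any_criterion else "not shortlisted"
```

Returning the hold reasons as a list, rather than a single flag, keeps the record of why a limit was deferred, which is useful when revisiting it for a later version.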

Documentation & design

Once structure and messaging were clear, I translated the soft limits into usable and scalable documentation.

We reused the platform team’s guardrails layout to maintain cross-product consistency, adapting tone and hierarchy for the in-beta, advisory context.

Support-First Content Strategy

Because most user issues were surfacing as support escalations, we created an extensive FAQ section based on real user queries and the answers support was already providing manually.

Designing for In-Product Integration (Exploration)

In parallel, I partnered with the product designer to explore how soft limits could surface earlier within the migration flow.

We identified key decision points such as:

• tool selection

• data validation

• pre-flight checks

We mapped where advisory links, warnings, or contextual guidance could reduce late-stage failures.

While these integration patterns were still in planning when I transitioned to other projects, the exploration defined clear touchpoints for future product embedding.
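To make the advisory nature of soft limits concrete, here is a minimal sketch of what a pre-flight check could look like. It is not the shipped design, since the integration was still exploratory. The `SoftLimit` structure, the function name, and especially the threshold values are placeholders invented for illustration; they are not published Atlassian limits.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class SoftLimit:
    """An advisory threshold for one migration component (hypothetical)."""
    component: str
    threshold: int
    guidance: str


# Placeholder values for illustration only; NOT real Jira C2C limits.
EXAMPLE_LIMITS = [
    SoftLimit("attachments", 1000, "Consider migrating in smaller batches."),
    SoftLimit("projects", 50, "Review the documented workarounds before starting."),
]


def preflight_warnings(counts: Dict[str, int], limits: List[SoftLimit]) -> List[str]:
    """Return advisory warnings for components that exceed their soft limit.

    The check never blocks a migration: it only reports, which is what
    separates a soft limit from a hard technical constraint.
    """
    warnings = []
    for limit in limits:
        count = counts.get(limit.component, 0)
        if count > limit.threshold:
            warnings.append(
                f"{limit.component}: {count} exceeds the advisory limit of "
                f"{limit.threshold}. {limit.guidance}"
            )
    return warnings


if __name__ == "__main__":
    for warning in preflight_warnings({"attachments": 1500, "projects": 10}, EXAMPLE_LIMITS):
        print(warning)
```

Because the function returns messages instead of raising an error, the same output could feed a warning banner, an advisory link, or a support macro at any of the decision points listed above.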

Launch & rollout

Externally:

email to beta users

community announcement

inclusion in Atlassian documentation hub

Internally:

support and engineering walkthroughs

show-and-tells across cloud teams

Closing reflection

This project closed a critical trust gap between product capability and user expectation.

More importantly, it showed how content design can shape product behavior, support workflows, and long-term system clarity in complex, evolving platforms.